In my previous post, Line Upon Line: Compositional Threat Modeling, I made the case for compositional threat modeling (CTM). In this post, I’ll explore how CTM is already being used unintentionally and why we need to adopt an intentional approach.

It’s one thing to suggest that we should be intentional in our use of CTM, but quite another to assert that we’ve been using it already. That’s very true. Let me explain. I will, of course, incorporate a bit of personal history, music and seamless plugs. If you stay with me on this, you’ll be treated to a cross between James Burke-esque and Carl Sagan-esque tale weaving.

In the early 1980s, I read Ken Thompson’s Turing Award Lecture, Reflections on Trusting Trust. In it, Thompson describes how the C compiler itself can be compromised in what we would today describe as a supply chain attack. I never again looked at my development tools in the same way. Up until that point, I’d never considered that the very tools I used to build secure systems could betray me, but in that lecture, Thompson made it very clear that I’d created a model of the world that was just wrong. And this gets to the heart of why CTM is important.

When we undertake the threat modeling activity, we are reasoning on a model of the design of a system. Note that there were two levels of indirection in that statement. That’s important. Essentially, we’re reasoning on a model of a model. This is the point at which I’ll shamelessly plug Adam Shostack for his shout-out to George Box for observing, “All models are wrong but some are useful.” As to why this is an important observation, we need to consider what Alan Turing wrote roughly twenty-five years prior in his 1952 paper The Chemical Basis of Morphogenesis.

“This model will be a simplification and an idealization, and consequently a falsification. It is to be hoped that the features retained for discussion are those of greatest importance in the present state of knowledge.”

It’s important to keep in mind that the model is a lie that we hope keeps sufficient features as to be useful. Problems occur when we forget that and start treating the model as though it were the actual system. To quote Alfred Korzybski, “a map is not the territory.”

In order for us to reason on the security of the design of a system, we discard most of the information regarding both the system and its design. And that’s okay, so long as the features retained for discussion are those of greatest importance in the present state of knowledge with respect to security.

Unfortunately, in the process of our relatively recent adoption of threat modeling as a security activity, we seem to have taken the approach called out in the song In the Real World from The Alan Parsons Project’s 1985 album Stereotomy: “Don’t wanna live my life in the real world.” By this I mean that the models that we’re working with contain fewer features than needed to produce sufficiently expressive results.

This is not to say that all the threat modeling results we get on a daily basis are bad. We can keep the baby and not drink the bath water. We’ve been leaving stuff out. And some of that stuff is important. What’s more important though is that the consumers of the issues identified by the threat models generally believe that we’ve completely juiced that orange.

This brings us back to Ken Thompson and compositional threat modeling. We trust our tools. We trust our operating environments. (Why I use the term operating environment and not operating system is covered in my post Does That Come in a Large? OS Scale in Threat Modeling.) We either leave the operating environment out of the model entirely or treat it as implicitly out of scope. We tend to do the same with open source and third-party libraries. This is a bad thing.

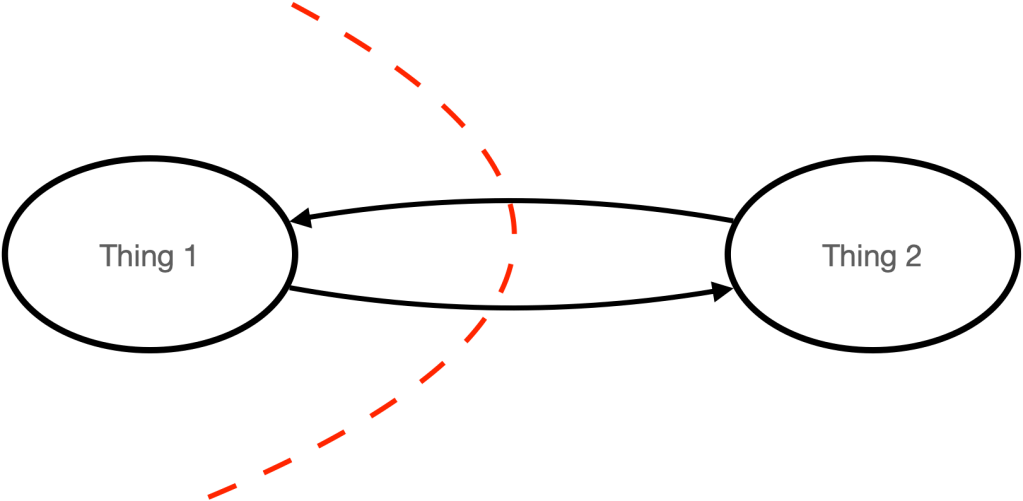

You would be right to argue that including the operating environment or other underlying bits would make our threat models overly complex and hard to maintain. I wouldn’t argue that point. Instead I would argue for treating them as a composition. Do the work to establish why you believe that you can trust them. Then and only then can you safely call them out of scope and move on to working at a higher level of abstraction. That’s the beauty of CTM. Any given threat model is applied only to protect its element. Once you’ve done that, you can compose the elements and deal with what’s shared between them. Typically, that’s not much. If I have two systems A and B and A requires authenticated source and so does B, when I connect them I have bi-directional source/destination authentication by composition. I trust the bits at a lower abstraction level because they have already been modeled and shown to be secure, not because I’ve taken it on faith. As the Russian proverb popularized by Ronald Reagan goes, “trust, but verify.”
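To make the A and B example concrete, here is a minimal Python sketch. Everything in it is hypothetical and invented for illustration: the `Element` class, its `requires` and `verified` fields, and the `compose` and `mutual` functions are not part of any threat modeling tool. It assumes each element’s own threat model has already told us which controls that element enforces on inbound traffic.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Element:
    """A separately threat-modeled element (hypothetical structure)."""
    name: str
    # Controls this element enforces on anything that connects to it.
    requires: frozenset = field(default_factory=frozenset)
    # True only once the element's own threat model has been completed.
    verified: bool = False


def compose(a: Element, b: Element) -> dict:
    """Connect two elements and report the controls on each direction."""
    if not (a.verified and b.verified):
        # Trust, but verify: no composing on faith.
        raise ValueError("model each element before composing it")
    return {
        f"{a.name}->{b.name}": b.requires,  # b checks what a sends
        f"{b.name}->{a.name}": a.requires,  # a checks what b sends
    }


def mutual(link: dict, control: str) -> bool:
    """True when every direction of the link enforces the control."""
    return all(control in controls for controls in link.values())


a = Element("A", frozenset({"authenticated_source"}), verified=True)
b = Element("B", frozenset({"authenticated_source"}), verified=True)
link = compose(a, b)
print(mutual(link, "authenticated_source"))
```

Neither element’s model says anything about the other. The bi-directional property only shows up when the two are connected, and `compose` refuses to connect anything whose own model hasn’t been done.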

When we apply CTM we no longer have big-bang threat models. We have manageable, composable ones. From the outside the system presents a model with a single surface. That’s important because that’s what an entity interacting with it sees. We don’t see the database underlying a web-hosted site. We see a socket-connected protocol. And even that view is an abstraction. It is through composition that we can consider what’s really important to the activity of threat modeling: the application of controls to places where they’re missing, but needed.

In a future post, I’ll get into more specifics as to how to apply CTM.

References

Mariana Trench image by 1840489pavan nd [https://commons.wikimedia.org/wiki/File:Mariana-trench.jpg]
