There’s a lot of confusion in the world of automotive cybersecurity. Who owns cybersecurity? Who does cybersecurity? When do we do cybersecurity? Why do we have to do cybersecurity?

Even when people realize that there is this thing called product cybersecurity (as distinct from IT or OT), they tend to believe that it’s a pre-development (one-and-done) threat modeling activity, or a post-development (yet also one-and-done) penetration test (destructive QA). In reality, it’s neither of these, but that’s a story for another day.

Today’s misunderstood construct is the cybersecurity management system (CSMS). Yes, per ISO, cybersecurity is one word. As the name implies, this is a tracking mechanism. It’s not proof of practice. It’s a proxy for it.

In practice, a CSMS overlays several other standard management systems that companies are expected to have. These include the document management system (DMS), requirement management system (RMS), and issue tracking system (which for some reason no one has given an acronym). The CSMS maps evidence of practice held in those systems to regulatory requirements.
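To make the "overlay" idea concrete, here is a minimal sketch of a CSMS as an index that links requirements to evidence living in other systems. Everything in it is an assumption made for illustration: the `Evidence` and `CsmsIndex` names, the `REQ-…` identifiers, and the document and ticket references are all hypothetical and do not come from any standard or real tool.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class Evidence:
    """A pointer to an artifact held in another management system."""
    system: str     # e.g. "DMS", "RMS", or "issues" (hypothetical labels)
    reference: str  # hypothetical identifier within that system


@dataclass
class CsmsIndex:
    """Maps requirement IDs to the evidence that supports them."""
    links: dict[str, list[Evidence]] = field(default_factory=dict)

    def require(self, requirement_id: str) -> None:
        # Register a requirement, even if no evidence exists yet.
        self.links.setdefault(requirement_id, [])

    def attach(self, requirement_id: str, evidence: Evidence) -> None:
        # Link a piece of evidence to a requirement.
        self.links.setdefault(requirement_id, []).append(evidence)

    def gaps(self) -> list[str]:
        # Requirements with no evidence attached at all.
        return sorted(r for r, ev in self.links.items() if not ev)


# Hypothetical requirement IDs and artifact references.
index = CsmsIndex()
for req in ("REQ-001", "REQ-002", "REQ-003"):
    index.require(req)

index.attach("REQ-001", Evidence("DMS", "DOC-17"))
index.attach("REQ-002", Evidence("RMS", "SYS-REQ-42"))
index.attach("REQ-002", Evidence("issues", "TICKET-108"))

print(index.gaps())  # ['REQ-003']
```

Note what the sketch does not do: it never inspects the artifacts themselves. It only records that something was filed against each requirement, which is why a CSMS is a proxy for practice and not proof of it.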

The CSMS should not be confused with technical standards or regulations. The former codify specific practices, and the latter codify governmental expectations (typically related to a company’s ability to sell a product into a market).

The CSMS is a box that you use to organize your evidence of practice and compliance. In this way it’s tied into the quality management system (QMS).

It’s important to appreciate that having a CSMS doesn’t mean that the product is secure from a product cybersecurity perspective, in the same way that having a revision control system (RCS) doesn’t mean you have a functional application. However, without a CSMS, you’ll be hard-pressed to organize the information needed to prove compliance.

If the CSMS doesn’t ensure cybersecurity for the product, what does?

That would be the implementation of a cybersecurity development lifecycle based on best practices. This is sometimes referred to as a secure development lifecycle (SDL), not to be confused with a system development lifecycle (SDLC) or software development lifecycle (also SDLC). That said, a proper cybersecurity development lifecycle will only be effective when built atop both of these.

The cybersecurity development lifecycle provides a comprehensive set of engineering activities which, when implemented, measured, and monitored, produce the best cybersecurity outcomes.

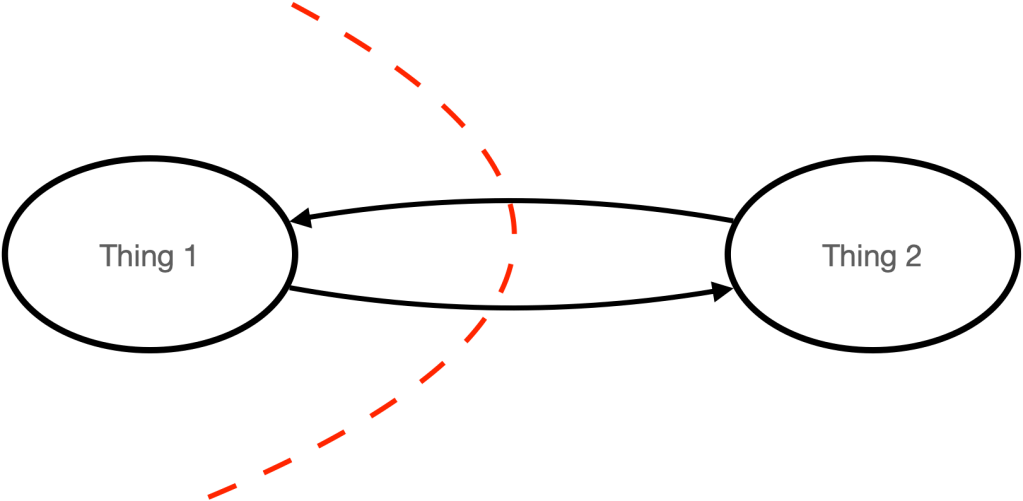

The CSMS implicitly assumes the existence of a cybersecurity development lifecycle. This is because the QMS presumes the implementation of the technical standards for both system and software. These mandate the implementation of system and software development lifecycles. The CSMS presumes conformance to applicable technical standards and these presume the presence of a QMS.

So the next time a company asserts that they have a CSMS, ask them whether they have documented system, software, and cybersecurity lifecycles.

In case you’d like to review what a fully documented cybersecurity development lifecycle looks like, you can check out the AVCDL (A Versatile Cybersecurity Development Lifecycle). It’s been twice assessed for compliance with both ISO/SAE 21434 and UN R155.
